union() combines the elements of the source dataset and the argument and returns the combined dataset. This is similar to the union operation on mathematical sets, except that Spark keeps duplicate elements. intersection() returns a dataset containing only the elements present in both the source dataset and the argument. distinct() returns the dataset with all duplicate elements removed.

repartition() returns a dataset with the number of partitions specified in the argument. It reshuffles the RDD's data randomly and can either increase or decrease the number of partitions, depending on the value supplied. coalesce() is similar to repartition() but works better when you want to decrease the number of partitions: it merges existing partitions, so it moves data from fewer nodes than the full shuffle across all nodes that repartition() performs.

For example, rdd.randomSplit(Array(0.7, 0.3)) splits an RDD into two RDDs holding roughly 70% and 30% of the elements.

In this section, I will explain a few RDD transformations using a word count example in Scala. Before we start, let's create an RDD by reading a text file. The text file used here is available on GitHub, and the Scala example is available in the GitHub project for reference.

val spark:SparkSession = SparkSession.builder()
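The set-style and partitioning transformations described above (union, intersection, distinct, repartition, coalesce) can be tried on small in-memory RDDs. The following is a minimal sketch, not the article's own example: it assumes the Spark SQL dependency is on the classpath, runs in local mode, and uses made-up sample data via parallelize() instead of the text file so that it stays self-contained. The object name, app name, and data are hypothetical.

```scala
import org.apache.spark.sql.SparkSession

object SetAndPartitionSketch {
  def main(args: Array[String]): Unit = {
    // Assumption: local mode and an arbitrary app name, for illustration only.
    val spark: SparkSession = SparkSession.builder()
      .master("local[2]")
      .appName("SetAndPartitionSketch")
      .getOrCreate()
    val sc = spark.sparkContext

    // Hypothetical sample data, spread over 4 and 2 partitions.
    val a = sc.parallelize(Seq(1, 2, 3, 3, 4), numSlices = 4)
    val b = sc.parallelize(Seq(3, 4, 5), numSlices = 2)

    // union keeps duplicates, unlike a mathematical set union.
    println(a.union(b).count())                                // 8
    // intersection keeps only elements found in both RDDs, without duplicates.
    println(a.intersection(b).collect().sorted.mkString(","))  // 3,4
    // distinct removes duplicate elements.
    println(a.distinct().collect().sorted.mkString(","))       // 1,2,3,4

    // repartition can raise or lower the partition count and always shuffles.
    println(a.repartition(6).getNumPartitions)                 // 6
    // coalesce lowers the partition count by merging existing partitions.
    println(a.coalesce(2).getNumPartitions)                    // 2

    spark.stop()
  }
}
```

Results are sorted after collect() only to make the printed order predictable; Spark itself gives no ordering guarantee for intersection() or distinct().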

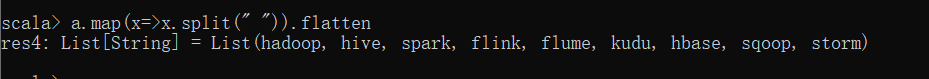

flatMap() flattens its results: if you have a dataset of arrays, it turns each element of each array into its own row. In other words, it returns zero or more output items for each element in the dataset. map() applies a transformation function to each element of the dataset and returns a distributed dataset with the same number of elements.

mapPartitions() is similar to map(), but runs the transformation function once per partition rather than once per element. This can perform better than map() when the function needs expensive setup, such as opening a database connection. mapPartitionsWithIndex() is similar to mapPartitions(), but also passes the function an integer representing the index of the partition.

randomSplit() splits the RDD by the weights specified in the argument.
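To make the differences between these functions concrete, here is a small sketch that applies each of them to the same input. It assumes Spark running in local mode; the object name, app name, sample lines, and seed are all made up for illustration and are not taken from the original example.

```scala
import org.apache.spark.sql.SparkSession

object MapFamilySketch {
  def main(args: Array[String]): Unit = {
    // Assumption: local mode and an arbitrary app name.
    val spark = SparkSession.builder()
      .master("local[2]")
      .appName("MapFamilySketch")
      .getOrCreate()
    val sc = spark.sparkContext

    // Hypothetical input: two lines, one per partition.
    val lines = sc.parallelize(Seq("spark makes rdds", "rdds are lazy"), numSlices = 2)

    // map: exactly one output element per input element.
    val lengths = lines.map(_.length)
    println(lengths.count())                  // 2

    // flatMap: zero or more output elements per input element.
    val words = lines.flatMap(_.split(" "))
    println(words.count())                    // 6

    // mapPartitions: the function receives a whole partition as an iterator.
    val sizes = words.mapPartitions(iter => Iterator(iter.size))
    println(sizes.collect().mkString(","))    // 3,3

    // mapPartitionsWithIndex: same, plus the index of the partition.
    val tagged = words.mapPartitionsWithIndex((idx, iter) => iter.map(w => s"$idx:$w"))
    println(tagged.collect().mkString(" "))   // 0:spark 0:makes 0:rdds 1:rdds 1:are 1:lazy

    // randomSplit takes an Array of weights; a fixed seed makes the split repeatable.
    val Array(larger, smaller) = words.randomSplit(Array(0.7, 0.3), seed = 42L)
    println(larger.count() + smaller.count()) // 6

    spark.stop()
  }
}
```

Note that the weights passed to randomSplit() are sampling probabilities, so the two resulting RDDs only approximate a 70/30 split, especially on small inputs.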

RDD transformations are Spark operations that, when executed on an RDD, produce one or more new RDDs. Since RDDs are immutable, a transformation never updates an existing RDD; it always creates a new one, and this chain of parent RDDs forms the RDD lineage. RDD lineage is also known as the RDD operator graph or RDD dependency graph.

In this tutorial, you will learn about lazy transformations, the types of transformations, and a complete list of transformation functions, illustrated with a word count example in Scala.

RDD transformations are lazy operations, meaning none of them is executed until you call an action on the RDD. Since RDDs are immutable, any transformation results in a new RDD and leaves the current one unchanged.

Narrow transformations are the result of functions such as map() and filter(). They compute data that lives on a single partition, so no data moves between partitions to execute them. map(), mapPartitions(), flatMap(), filter(), and union() are some examples of narrow transformations.

Wider transformations are the result of functions such as groupByKey() and reduceByKey(). They compute data that lives on many partitions, so data must move between partitions to execute them. Since they shuffle the data, they are also called shuffle transformations. groupByKey(), aggregateByKey(), join(), and repartition() are some examples of wider transformations.

Note: Compared to narrow transformations, wider transformations are expensive operations because of shuffling.

filter() returns a new RDD containing only the elements of the source dataset for which the filter function returns true.
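Laziness, lineage, and the narrow versus wider distinction can all be observed in a small word count. The sketch below is an illustration under stated assumptions rather than the tutorial's own code: it runs in local mode, uses made-up input lines, and counts processed elements with an accumulator (which is only reliable here because local mode with no task retries runs each task once). The object name, app name, and accumulator name are hypothetical.

```scala
import org.apache.spark.{NarrowDependency, ShuffleDependency}
import org.apache.spark.sql.SparkSession

object LazyLineageSketch {
  def main(args: Array[String]): Unit = {
    // Assumption: local mode and an arbitrary app name.
    val spark = SparkSession.builder()
      .master("local[2]")
      .appName("LazyLineageSketch")
      .getOrCreate()
    val sc = spark.sparkContext

    // Hypothetical input lines.
    val lines = sc.parallelize(Seq("a b a", "b c"))
    // Counts how many words the map step has actually processed.
    val processed = sc.longAccumulator("processed")

    // Narrow steps: flatMap and map work within each partition.
    val pairs = lines.flatMap(_.split(" ")).map { word => processed.add(1); (word, 1) }
    // Wider step: reduceByKey must bring equal keys together, so it shuffles.
    val counts = pairs.reduceByKey(_ + _)

    // No action has run yet, so nothing has been processed.
    println(processed.sum)                                                     // 0

    // The dependency type shows which kind of transformation produced each RDD.
    println(pairs.dependencies.head.isInstanceOf[NarrowDependency[_]])         // true
    println(counts.dependencies.head.isInstanceOf[ShuffleDependency[_, _, _]]) // true

    // Prints the lineage (dependency graph) of the final RDD.
    println(counts.toDebugString)

    // collect() is an action: it triggers the whole chain of transformations.
    println(counts.collect().sorted.mkString(", "))                            // (a,2), (b,2), (c,1)
    println(processed.sum)                                                     // 5

    spark.stop()
  }
}
```

The accumulator stays at zero until collect() runs, which is the lazy behavior described above; toDebugString shows the shuffle boundary introduced by reduceByKey().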
